DIY Air Quality Sensor with Wireless Logging

September 2021

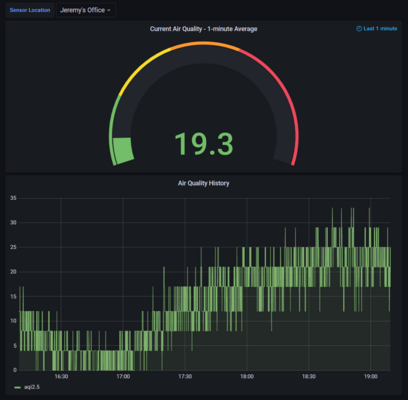

These days, summers in Seattle aren’t quite as nice as they used to be: summer is now also wildfire season, and the region is often blanketed with unhealthy levels of particulate matter. Sites such as PurpleAir are a great way to learn about outdoor air quality, but I wanted an indoor sensor that would let me check how effective my HVAC’s filters are.

Many sensors just show the current air quality on a display built into the device. I wanted something fancier that transmits data wirelessly and stores it in a database, allowing me to see trends in historical data and monitor my air quality remotely. The PurpleAir sensor does this, but there are two problems:

- It costs $200, a little too expensive to buy 2 or 3 to put around the house.
- In the summer of 2020, when this project started, the wildfire season along the entire West Coast was so bad that almost all the “ready-to-go” sensor systems were sold out.

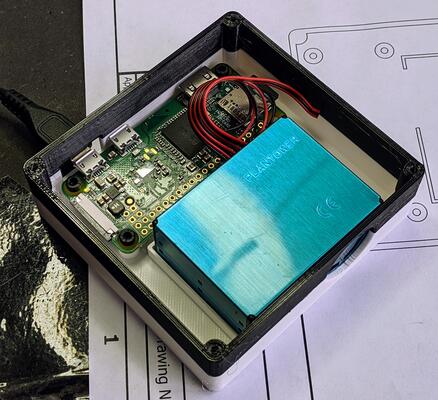

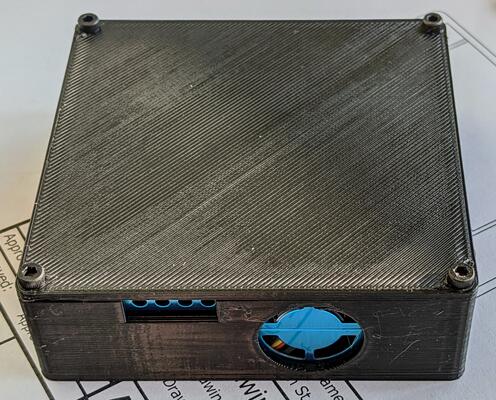

I solved both of these problems by building something myself. Although the complete sensor systems were sold out, it was still possible to buy a “raw” sensor: a bare module with wires coming out the back that emits its readings over a serial port. I used the Plantower PMS5003 particulate sensor, which measures PM1.0, PM2.5, and PM10.0 concentrations. I combined it with a tiny Raspberry Pi Zero W ($10), a 3D-printed case I designed to fit the Pi and sensor, an open-source database (Postgres) to store the data, and an open-source, web-based data visualization program (Grafana). I wrote some Python software to tie everything together, and voilà: a complete air quality monitor with wireless data logging and graphing for about $35.
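
For anyone writing their own reader, the PMS5003 sends fixed 32-byte frames: two start bytes (0x42 0x4D), a 2-byte frame length, thirteen big-endian 16-bit data words, and a 2-byte checksum equal to the sum of all preceding bytes. The sketch below is not the code from my repository; it parses that layout as I understand it from the Plantower datasheet, and the names `parse_frame`, `read_frames`, and `Pms5003Reading` are made up for illustration. It works with any object that has a `read(n)` method, such as a pyserial `Serial` port.

```python
import struct
from typing import BinaryIO, Iterator, NamedTuple, Optional

START = b"\x42\x4d"   # frame start characters, ASCII "BM"
FRAME_LEN = 32        # bytes per frame, start characters included


class Pms5003Reading(NamedTuple):
    pm1_0: int    # µg/m³, "atmospheric environment" values
    pm2_5: int
    pm10_0: int


def parse_frame(frame: bytes) -> Optional[Pms5003Reading]:
    """Return the reading in one 32-byte frame, or None if it fails validation."""
    if len(frame) != FRAME_LEN or frame[:2] != START:
        return None
    # After the start bytes: frame length, 13 data words, checksum
    # (all big-endian unsigned 16-bit integers).
    length, *words, checksum = struct.unpack(">15H", frame[2:])
    if length != 2 * 13 + 2:
        return None
    # The checksum is the low 16 bits of the sum of every preceding byte.
    if checksum != sum(frame[:30]) & 0xFFFF:
        return None
    # words[0:3] are the "CF=1" concentrations, words[3:6] the atmospheric
    # ones; the rest are particle counts per 0.1 L of air plus a reserved word.
    return Pms5003Reading(*words[3:6])


def read_frames(stream: BinaryIO) -> Iterator[Pms5003Reading]:
    """Yield readings from a byte stream, resynchronizing on the start bytes.

    Stops when read() returns nothing (end of file, or a serial timeout).
    """
    while True:
        first = stream.read(1)
        if not first:
            return
        if first != START[:1] or stream.read(1) != START[1:]:
            continue  # not at a frame boundary yet; keep scanning
        reading = parse_frame(START + stream.read(FRAME_LEN - 2))
        if reading is not None:
            yield reading
```

With pyserial, something like `read_frames(serial.Serial("/dev/serial0", 9600, timeout=2))` would yield readings until a read times out; the device path is an assumption that depends on how the Pi’s UART is configured.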

The first version of my software simply logged data locally. It worked well enough that I reworked it into a distributed system, splitting the software into two halves:

- A client runs on the Raspberry Pi. It reads data from the sensor’s serial port, timestamps each reading, and periodically sends a batch of data to the server over WiFi. If the server is unreachable, the client buffers data while continuing to acquire new readings, and uploads the backlog once the server is reachable again.
- A server receives data from all the sensors and inserts it into a database, where it is available for later visualization or queries. The protocol runs over HTTPS (HTTP with TLS encryption) to prevent snooping. The data format is simple JSON, with a couple of extra fields for a password-based authentication scheme that prevents unauthorized use of the server.
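
The client’s store-and-forward behavior can be sketched with a bounded queue: readings are appended as they arrive, and on each upload cycle the oldest ones are sent in batches and removed only after the server accepts them. This is a minimal sketch under assumptions, not the code in my repository: the `send` callable stands in for the real HTTPS request, the JSON field names (`sensor_id`, `password`, `readings`) are hypothetical, and discarding the oldest readings once the buffer is full is just one possible policy.

```python
import itertools
import json
import time
from collections import deque
from typing import Callable, Deque, Optional


class ReadingBuffer:
    """Holds timestamped readings until the server has accepted them."""

    def __init__(self, max_readings: int = 100_000) -> None:
        # Bounded so that a long outage cannot exhaust the Pi's memory. Once
        # full, deque(maxlen=...) silently discards the oldest readings.
        self._pending: Deque[tuple] = deque(maxlen=max_readings)

    def __len__(self) -> int:
        return len(self._pending)

    def add(self, pm1_0: int, pm2_5: int, pm10_0: int,
            ts: Optional[float] = None) -> None:
        """Timestamp a reading on arrival and queue it for upload."""
        stamp = time.time() if ts is None else ts
        self._pending.append((stamp, pm1_0, pm2_5, pm10_0))

    def flush(self, send: Callable[[bytes], bool], sensor_id: str,
              password: str, batch_size: int = 500) -> int:
        """Upload queued readings oldest-first; return how many were accepted.

        `send` posts one JSON body and returns True on success. On the first
        failure we stop and keep the backlog for the next cycle.
        """
        accepted = 0
        while self._pending:
            batch = list(itertools.islice(self._pending, batch_size))
            body = json.dumps({
                "sensor_id": sensor_id,   # hypothetical field names
                "password": password,
                "readings": batch,
            }).encode("utf-8")
            if not send(body):
                break  # server unreachable: keep everything, retry later
            # Remove only what the server acknowledged.
            for _ in batch:
                self._pending.popleft()
            accepted += len(batch)
        return accepted
```

Because the password travels inside the request body, it relies on the TLS layer for confidentiality; the sketch assumes a single thread both adds readings and flushes them.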

One interesting tradeoff was how often to send data. Sending too often leads to high overhead; sending too infrequently means a potentially long delay before new data is available for viewing. I ended up using HTTP’s persistent connection feature, which cuts the overhead considerably: because the TLS handshake happens once rather than on every transaction, each batch of data requires just 3 TCP segments from client to server and 2 back the other way. With the overhead that low, I settled on sending data from each client every 15 seconds.
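
In Python’s standard library, a persistent connection amounts to keeping one `http.client.HTTPSConnection` open and issuing every request through it, so the TCP and TLS handshakes happen only when the connection is first used or reopened. The class below is an illustrative sketch assuming a hypothetical `/api/readings` endpoint; it is not necessarily how my client does it. One detail matters for reuse: each response body must be read completely before the next request is sent.

```python
import http.client
import json
from typing import Optional


class BatchUploader:
    """Posts JSON batches over one HTTP/1.1 connection that is kept open."""

    def __init__(self, host: str, port: int = 443,
                 path: str = "/api/readings",   # hypothetical endpoint
                 use_tls: bool = True, timeout: float = 10.0) -> None:
        self.host, self.port, self.path = host, port, path
        self.use_tls, self.timeout = use_tls, timeout
        self._conn: Optional[http.client.HTTPConnection] = None

    def _send(self, body: bytes) -> int:
        if self._conn is None:
            # Creating the object is cheap; the TCP (and TLS) handshake runs on
            # its first request, and later batches reuse the open socket.
            cls = (http.client.HTTPSConnection if self.use_tls
                   else http.client.HTTPConnection)
            self._conn = cls(self.host, self.port, timeout=self.timeout)
        try:
            self._conn.request("POST", self.path, body=body,
                               headers={"Content-Type": "application/json"})
            resp = self._conn.getresponse()
            resp.read()   # drain the body, or the connection cannot be reused
            return resp.status
        except (http.client.HTTPException, OSError):
            self.close()  # discard the broken connection
            raise

    def post_batch(self, payload: dict) -> int:
        """Send one batch and return the HTTP status code."""
        body = json.dumps(payload).encode("utf-8")
        try:
            return self._send(body)
        except (http.client.HTTPException, OSError):
            # The server may have closed an idle connection between batches;
            # retry once on a fresh one, letting a second failure propagate.
            return self._send(body)

    def close(self) -> None:
        if self._conn is not None:
            self._conn.close()
            self._conn = None
```

Because a failed POST is retried once, a batch could reach the server twice if the first attempt was processed but its response was lost; a unique key on something like (sensor, timestamp) in the database would make such duplicates harmless.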

I was very pleased with the end result, and a few friends have started using it too! It’s been fascinating watching indoor air quality trends over time. The data has given me a good sense of how quickly the inside and outside air reach equilibrium if the HVAC is off (about 8 hours), how long it takes the HVAC to clean the air in my home when it runs (about half an hour), and what sorts of activities can cause particulate matter to spike (e.g., frying something in the kitchen). I also like that I maintain control over all my data, which would not be the case with an off-the-shelf logging sensor.

If you’d like to reproduce my work, my GitHub repository has all my software, 3D models for the case, and instructions on how to build and set up a sensor of your own. If you use it, please let me know!