High Precision NTP Client for the ESP32

Jeremy Elson, February 2022

On this page, I describe my experience building and characterizing an NTP client for the ESP32. My client was able to synchronize to UTC using an Internet time server about 4ms away with the following accuracy:

| Statistic | Absolute error |
| --- | --- |
| Median error | 216us |
| 95th percentile | 801us |
| 99th percentile | 1,607us |
| 99.9th percentile | 2,461us |

Background

The ESP32 is a family of very low-cost but highly capable microcontroller chips that have become popular with hobbyists. You can buy a complete module on Amazon – dual-core, 160 MHz, with built-in WiFi and Bluetooth, plus a USB interface for programming – for about $7. The previous-generation ESP8266 is sold for the even more absurd price of about $4!

Lately I’ve been interested in trying to collect sensor data with an ESP32 and report it to a server over the Internet. Of course, I’d like the sensor readings to be properly timestamped. Although there are a few NTP libraries available for the ESP32, none that I found had a characterization of its performance. In addition, they all did “one-shot” synchronization, i.e., they use just one observation at a time to determine the offset of the local clock to the NTP timescale. They did not attempt to keep a history of observations and do any outlier rejection or correct for local clock rate error. I was convinced I could do better.

Implementation

I wrote an NTP client for the ESP32 that periodically sends a request to an NTP server and keeps the history of recent observations of the offset between the local clock and the NTP server’s clock. The one-way latency is assumed to be half the round trip time, minus the server’s reported processing delay and a calibration constant described below. When first starting up, it sends a request every 4 seconds until it has 10 responses. Then, in the steady-state, it sends a request every 60 seconds, keeping a history of the past 30 minutes of observations.
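As a rough illustration of how a single observation could be derived from the four timestamps in an NTP exchange, here is a minimal sketch. It is written in Python for brevity rather than the C that would run on the ESP32, and it is not the client's actual code. The names `Observation`, `make_observation` and `CALIBRATION_S` are hypothetical, and applying the calibration constant to the return-path latency is an assumption.

```python
from dataclasses import dataclass

# Assumed value: a fixed latency correction in seconds (hypothetical name).
CALIBRATION_S = 200e-6


@dataclass
class Observation:
    local_time: float  # local clock reading when the reply arrived (t4), seconds
    offset: float      # estimated (server clock - local clock) at local_time, seconds
    rtt: float         # round-trip time excluding server processing, seconds


def make_observation(t1, t2, t3, t4, calibration=CALIBRATION_S):
    """Build one observation from the four NTP timestamps.

    t1: local clock when the request was sent
    t2: server clock when the request was received
    t3: server clock when the reply was sent
    t4: local clock when the reply was received
    """
    # Time spent on the network: total elapsed time minus server processing.
    rtt = (t4 - t1) - (t3 - t2)
    # Assume the return trip took half of that, less the calibration constant.
    one_way = rtt / 2.0 - calibration
    # The server clock read t3 + one_way at the moment the local clock read t4.
    offset = (t3 + one_way) - t4
    return Observation(local_time=t4, offset=offset, rtt=rtt)
```

With `calibration=0.0` this reduces to the standard NTP offset formula, `((t2 - t1) + (t3 - t4)) / 2`.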

Each time a new observation is received, it performs simple least-squares linear regression on all the observations to find the best coefficients of a line that relates the local clock to the NTP timescale. The user interface to my library is simple – “get current epoch timestamp” – which works by reading the local clock and applying the linear model to predict the corresponding NTP timestamp. Linear regression has two good effects: first, it averages away noise in individual observations (assuming they are uncorrelated and unbiased). Second, it models the rate difference between the local clock and the NTP timescale, which significantly improves the accuracy even as the time since the most recent observation grows.
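The regression step can be sketched as follows. This is an illustrative Python version under stated assumptions, not the library's code: observations are taken to be `(local_time, offset)` pairs, the function names `fit_clock_model` and `to_ntp_time` are hypothetical, and the fit is centered on the mean local time to keep the arithmetic well conditioned.

```python
def fit_clock_model(observations):
    """Least-squares fit of offset as a linear function of local time.

    observations: list of (local_time, offset) pairs, in seconds.
    Returns (x0, y0, slope): the mean local time, the mean offset, and
    the rate difference between the two clocks (seconds per second).
    """
    n = len(observations)
    if n < 2:
        raise ValueError("need at least two observations")
    x0 = sum(x for x, _ in observations) / n
    y0 = sum(y for _, y in observations) / n
    sxx = sum((x - x0) ** 2 for x, _ in observations)
    sxy = sum((x - x0) * (y - y0) for x, y in observations)
    if sxx == 0:
        raise ValueError("observations must span more than one instant")
    return x0, y0, sxy / sxx


def to_ntp_time(local_time, model):
    """Predict the NTP timestamp that corresponds to a local clock reading."""
    x0, y0, slope = model
    return local_time + y0 + slope * (local_time - x0)
```

Because the slope term captures the rate error, a query made well after the last observation still extrapolates along the fitted line instead of holding a stale offset.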

Reducing Timestamp Jitter

The ESP32 network stack is built on a lightweight TCP/IP stack called LWIP. It has an API that looks much like the Berkeley socket API, e.g. sendto() and recvfrom() to send and receive UDP packets. Unfortunately, LWIP has chosen not to propagate interrupt-time reception timestamps up to the application as metadata. Early revisions of my NTP client did the same thing that all the other ESP32 NTP clients do: record the time before sending a UDP packet, and again when we read the data out of LWIP’s buffers using recv(). This leads to high jitter (on the scale of 10s of milliseconds), as timestamp acquisition is delayed behind all packet processing, which itself is subject to the whims of the ESP32’s FreeRTOS scheduler.

However, the ESP32 does offer a shortcut: the WiFi driver has a promiscuous mode in which user programs can receive a callback directly from the WiFi driver whenever a packet is received. This is meant for applications such as WiFi sniffing, but it has the nice side effect of giving me a low-jitter event to record packet reception times. In fact, the promiscuous mode callback contains WiFi metadata including a reception timestamp acquired directly in the WiFi hardware. Using that hardware timestamp would require some extra work to relate the WiFi clock to the system clock, but could be another source of jitter reduction that I have not implemented.

There seems to be no similar way to get precise send-time timestamps.

High RTT Filter

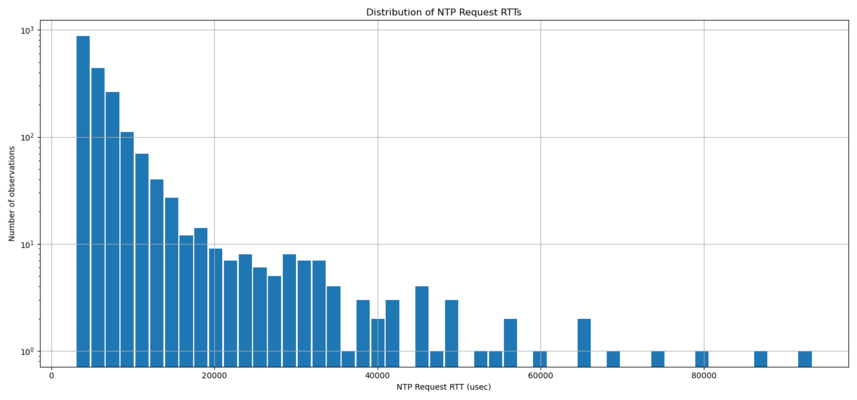

My tests were all against a public Stratum 2 NTP server run by the University of Washington. From my home in downtown Seattle, the RTT to that server from a WiFi-connected laptop is about 4ms. However, despite using promiscuous mode to reduce jitter on the receive timestamps, my client experienced a long tail of jitter in the RTTs, as seen in the accompanying graph.

Since my laptop does not see this jitter, it is likely coming from some part of the ESP32’s network stack or WiFi hardware. When I compared the total synchronization error before and after adding low-jitter receive timestamping, the error distribution had changed from symmetrical to highly one-sided. This suggests that the remaining jitter is primarily variability on the sending side, i.e., the time it takes the packet to get through the LWIP network stack and underlying WiFi hardware.

Of course, these sorts of delays contribute directly to error. As a simple filter, my client discards observations in the top quartile of RTTs before feeding the remainder into the linear regression algorithm.
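A filter of this kind might look like the sketch below, shown in Python for brevity. The tuple layout `(local_time, offset, rtt)`, the function name `drop_high_rtt`, and the exact way the quartile boundary is chosen are all assumptions made for illustration.

```python
def drop_high_rtt(observations, keep_fraction=0.75):
    """Discard the observations with the largest round-trip times.

    observations: list of (local_time, offset, rtt) tuples.
    keep_fraction: share of observations to keep, lowest RTT first.
    Returns the surviving observations in their original order.
    """
    if not observations:
        return []
    rtts = sorted(obs[2] for obs in observations)
    # Index of the largest RTT that is still allowed through.
    cutoff_index = max(0, int(len(rtts) * keep_fraction) - 1)
    cutoff = rtts[cutoff_index]
    return [obs for obs in observations if obs[2] <= cutoff]
```

Filtering on a quantile rather than a fixed RTT threshold means the same code works unchanged against a server that is 4ms away or 40ms away.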

Latency Asymmetry Correction

As people familiar with NTP know, its accuracy depends on symmetry in the forward and reverse path latencies. Only the round-trip time can be measured directly, so we infer that the one-way latency is half the RTT. This assumption is only true if the forward and reverse latencies are equal, and any asymmetry in those two latencies contributes directly to error. This more-or-less works on the Internet, and though sometimes packet queues will increase the latency in one direction or the other, in the long term it’s often an unbiased estimate.
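To make the cost of asymmetry concrete, here is the standard NTP offset calculation worked through with unequal path latencies. The symbols are introduced here for illustration only: $\theta$ is the true offset of the server clock relative to the local clock, $d_f$ and $d_r$ are the forward and reverse one-way latencies, and $t_1, t_2, t_3, t_4$ are the client-send, server-receive, server-send and client-receive timestamps.

$$
\begin{aligned}
t_2 &= t_1 + d_f + \theta \\
t_4 &= t_3 + d_r - \theta \\
\hat{\theta} &= \frac{(t_2 - t_1) + (t_3 - t_4)}{2} = \theta + \frac{d_f - d_r}{2}
\end{aligned}
$$

Under this model the estimate is off by half the difference between the two latencies, so a 400us difference between the two paths would show up as a 200us offset error, and it would vanish only when $d_f = d_r$.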

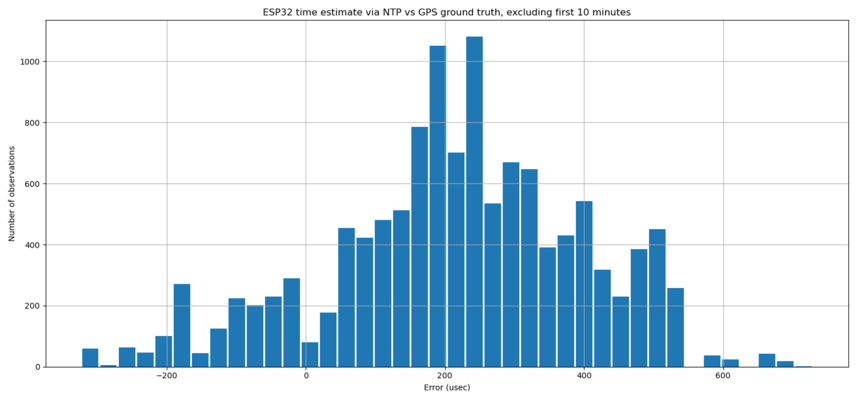

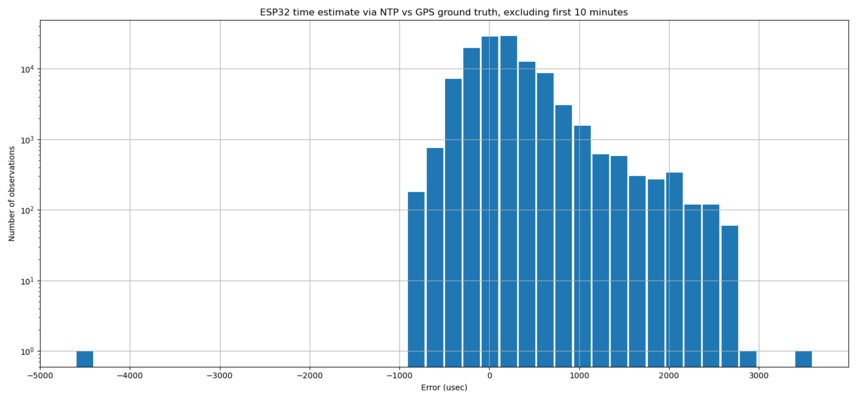

My early testing indicated that the forward and reverse path latencies were not the same. That is, when I compared the NTP-acquired timestamp to ground truth in a long-term test (as described in the next section) I found the errors were not centered around zero, but were biased in one direction with a median of about 200us, as seen in the accompanying histogram.

I suspect this is a difference in the time required to send a packet through the ESP32’s stack vs the time required to receive it. I chose to calibrate this asymmetry out by subtracting a hard-coded constant of 200us from the latency estimate.

Evaluation

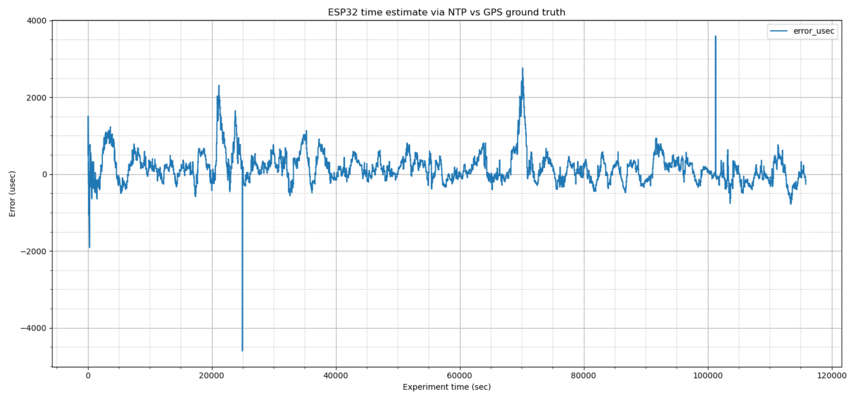

I evaluated my NTP client by using it to timestamp the PPS output of a uBlox M10 GNSS. My antenna has a view of about 180 degrees of sky. The uBlox is configured to listen to the GPS, Galileo and GLONASS constellations, and during most of this test was using about 20 satellites for its solution. Once per second, at the top of each UTC second, the GNSS generates a pulse, which I wired into one of the ESP32’s GPIO pins. A GPIO interrupt handler on the ESP32 gets the current timestamp from my NTP service and emits it to the serial port. I record the results and compute the error in the reported timestamp, e.g., a reported timestamp of 1644310833.999917 has an error of 83 microseconds.
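The error calculation for each logged line can be sketched as below. This is an illustrative Python post-processing helper, not the script actually used: the name `pps_error_us` is hypothetical, and it assumes the clock is never wrong by as much as half a second, so the nearest whole second is the one the pulse marked. `Decimal` is used to avoid floating-point rounding on epoch-sized values.

```python
from decimal import Decimal, ROUND_HALF_EVEN


def pps_error_us(reported: str) -> int:
    """Signed error, in microseconds, of a timestamp taken at a PPS edge.

    reported: the timestamp as logged, e.g. "1644310833.999917".
    A negative result means the reported time was early (before the
    true top of the second); a positive result means it was late.
    """
    ts = Decimal(reported)
    nearest_second = ts.to_integral_value(rounding=ROUND_HALF_EVEN)
    # Sub-microsecond digits, if present, are truncated toward zero.
    return int((ts - nearest_second) * 1_000_000)
```

For the example above, `pps_error_us("1644310833.999917")` returns -83, i.e. an absolute error of 83 microseconds.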

During my tests, the ESP32 sent an NTP request to the University of Washington’s public Stratum 2 NTP server over the Internet every 60 seconds. The nominal RTT to that server from my home via WiFi is about 4ms. I collected over 100,000 data points.

This graph shows time-series data of the errors over the course of the experiment:

Here is a histogram of the absolute errors recorded, excluding the first ten minutes of warmup. The median is close to 0, thanks to the calibration constant described in the previous section. Note that the long tail of errors is one-sided (other than some outliers). As described earlier, this is because I can acquire low-jitter reception timestamps but not low-jitter send-time timestamps.

Numerically, abs(error) can be summarized as:

| Statistic | Absolute error |
| --- | --- |
| Median error | 216us |
| 95th percentile | 801us |
| 99th percentile | 1,607us |
| 99.9th percentile | 2,461us |
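A summary like this can be produced from the list of signed errors with a few lines of Python. The sketch below uses the nearest-rank definition of a percentile, which is an assumption; other definitions interpolate between samples and give slightly different values. The function names are hypothetical.

```python
import math


def percentile(values, p):
    """Nearest-rank percentile: the smallest value such that at least
    p percent of the samples are less than or equal to it."""
    if not values:
        raise ValueError("no samples")
    if not 0 < p <= 100:
        raise ValueError("p must be in (0, 100]")
    ordered = sorted(values)
    rank = math.ceil(p * len(ordered) / 100)
    return ordered[rank - 1]


def summarize(errors_us):
    """Median, 95th, 99th and 99.9th percentile of abs(error)."""
    magnitudes = [abs(e) for e in errors_us]
    return {p: percentile(magnitudes, p) for p in (50, 95, 99, 99.9)}
```

The 99.9th percentile is only meaningful with well over a thousand samples, since below that it simply reports the worst one.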

Drawbacks

My implementation has several drawbacks compared to a real NTP client. Perhaps foremost is that it makes no effort to create a monotonic timescale. Every time a new observation arrives, a new linear regression is performed, and all time queries immediately start using that new model. This can result in a discontinuity in the timescale, e.g., two sensor observations collected 10ms apart might have timestamps that differ by significantly more than 10ms, or even be timestamped in the wrong order. This is in contrast to the reference NTP client, which gradually slews the clock with the goal of keeping the local timescale continuous and keeping the instantaneous rate close to SI seconds, even while adjustments are happening. The ESP32 does support an adjtime() call so a continuous timescale should be possible.
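To illustrate the slewing idea (this is not something the client does, and it does not use adjtime()), here is a minimal Python sketch that moves the applied correction toward the model's latest offset at a bounded rate instead of jumping. The class name `SlewedClock` and the 500 ppm rate limit are assumptions chosen for the example.

```python
class SlewedClock:
    """Applies offset corrections gradually so reported time never steps."""

    def __init__(self, max_slew=500e-6):
        # Maximum correction change per second of local time (assumed value).
        self.max_slew = max_slew
        self.last_local = None
        self.correction = 0.0  # offset currently being applied, seconds

    def now(self, local_time, target_offset):
        """Return the corrected time for a local clock reading.

        target_offset is the offset the latest model says should be
        applied at local_time. Calls must use non-decreasing local_time.
        """
        if self.last_local is None:
            # Nothing to stay continuous with yet, so step once.
            self.correction = target_offset
        else:
            elapsed = local_time - self.last_local
            max_step = self.max_slew * elapsed
            delta = target_offset - self.correction
            # Move toward the target, but no faster than the slew limit.
            self.correction += max(-max_step, min(max_step, delta))
        self.last_local = local_time
        return local_time + self.correction
```

Because the correction changes by less than the elapsed local time, successive results stay in order even when a new model disagrees sharply with the old one. The trade-off is that a large error takes a long time to work off: at 500 ppm, removing 50ms takes 100 seconds.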

Another drawback is that my outlier rejection is not very sophisticated, and least-squares fitting is very sensitive to big outliers. The next step, if I ever return to this project, would be an iterative algorithm that draws a linear regression line, looks for outliers, discards them, and redraws the regression line with the remaining points.
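That iterative approach could be sketched roughly as follows, in Python. None of this is implemented in the client: the function names, the rejection threshold of three times the RMS residual, the 100us floor below which nothing is rejected, and the round limit are all assumptions chosen for illustration.

```python
def fit_line(points):
    """Ordinary least-squares line through (x, y) points.
    Returns (slope, intercept)."""
    n = len(points)
    mx = sum(x for x, _ in points) / n
    my = sum(y for _, y in points) / n
    sxx = sum((x - mx) ** 2 for x, _ in points)
    sxy = sum((x - mx) * (y - my) for x, y in points)
    slope = sxy / sxx
    return slope, my - slope * mx


def robust_fit(points, threshold=3.0, floor=100e-6, max_rounds=10):
    """Fit, drop points far from the line, and refit until stable.

    A point is dropped when its residual exceeds both threshold times
    the RMS residual and the absolute floor (same units as y).
    Returns ((slope, intercept), kept_points).
    """
    kept = list(points)
    for _ in range(max_rounds):
        slope, intercept = fit_line(kept)
        residuals = [y - (slope * x + intercept) for x, y in kept]
        rms = (sum(r * r for r in residuals) / len(residuals)) ** 0.5
        limit = max(threshold * rms, floor)
        survivors = [p for p, r in zip(kept, residuals) if abs(r) <= limit]
        # Stop when nothing was rejected or too few points would remain.
        if len(survivors) == len(kept) or len(survivors) < 3:
            break
        kept = survivors
    return fit_line(kept), kept
```

The floor keeps the loop from chasing ordinary noise once the gross outliers are gone. A single very large outlier can still inflate the RMS enough to hide smaller ones in the first round, which is why the fit is repeated.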

Summary

Despite being cheap hardware, and having a slow network stack that suffers from high jitter, it’s possible for an ESP32 to get a view of UTC typically accurate to under 1 millisecond using only an Internet NTP server accessed using its built-in WiFi. Though worse than the performance one would expect from a desktop computer, it’s in the same ballpark, and could be improved by investing more time into better outlier rejection.